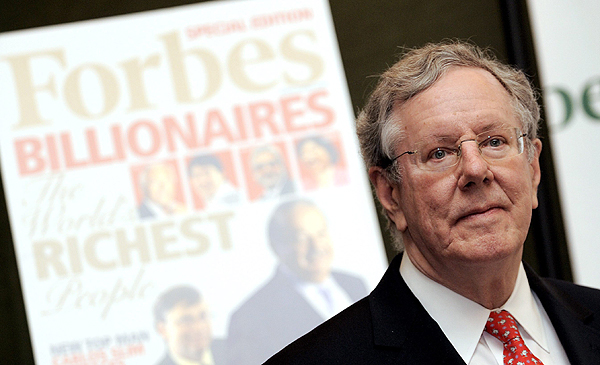

Bert Dohmen S Instagram Twitter Facebook On Idcrawl

Bidirectional Encoder Representations from Transformers (BERT) is a language model introduced in October 2018 by researchers at Google. [1][2] It learns to represent text as a sequence of vectors using self-supervised learning, and it uses the encoder-only transformer architecture. Its authors describe it this way: "We introduce a new language representation model called BERT, which stands for Bidirectional Encoder Representations from Transformers. Unlike recent language representation models, BERT is designed to pre-train deep bidirectional representations from unlabeled text by jointly conditioning on both left and right context in all layers."
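One way to picture "conditioning on both left and right context" is as an attention mask. The sketch below is illustrative only and is not BERT's actual code: the function name `attention_mask` and the example sentence are assumptions made here. It contrasts a left-to-right mask, where each position sees only itself and earlier positions, with a bidirectional one, where each position sees the whole sequence.

```python
def attention_mask(seq_len: int, bidirectional: bool) -> list[list[int]]:
    """Build a seq_len x seq_len mask.

    mask[i][j] == 1 means position i is allowed to attend to position j.
    (Hypothetical helper for illustration; not part of any BERT library.)
    """
    return [
        [1 if bidirectional or j <= i else 0 for j in range(seq_len)]
        for i in range(seq_len)
    ]


tokens = ["the", "bank", "of", "the", "river"]
causal = attention_mask(len(tokens), bidirectional=False)  # left-to-right model
full = attention_mask(len(tokens), bidirectional=True)     # BERT-style encoder

# Which tokens can the position holding "bank" see under each mask?
i = tokens.index("bank")
visible_causal = [t for t, m in zip(tokens, causal[i]) if m]
visible_full = [t for t, m in zip(tokens, full[i]) if m]

print(visible_causal)  # ['the', 'bank']
print(visible_full)    # ['the', 'bank', 'of', 'the', 'river']
```

Under the left-to-right mask, "bank" cannot see "river", so its representation cannot use that word to resolve the ambiguity; under the bidirectional mask it can.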

BERT is a bidirectional transformer pretrained on unlabeled text with two objectives: predicting masked tokens in a sentence, and predicting whether one sentence follows another. The main idea is that by randomly masking some tokens, the model can train on the text to both the left and the right of each masked position, giving it a more thorough understanding of context.

BERT is an open-source model for natural language processing (NLP), and it is best known for its ability to consider context by analyzing the relationships between words in a sentence bidirectionally. In the following, we'll explore BERT models from the ground up: what they are, how their architecture works, and most importantly, how to use them practically in your projects.
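The two pretraining objectives can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions, not the original training code: the helper names `mask_tokens` and `build_pair`, the whitespace tokenization, and the masking probability used in the example are all choices made here. BERT itself uses WordPiece tokens, and its masking recipe does not always substitute `[MASK]` for a selected token.

```python
import random

MASK = "[MASK]"


def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Randomly replace tokens with [MASK] (simplified masked-token objective).

    Returns (masked_tokens, labels). labels[i] holds the original token at
    masked positions and None elsewhere, so a model would only be scored on
    the positions it had to fill in.
    """
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    masked, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            masked.append(MASK)
            labels.append(tok)
        else:
            masked.append(tok)
            labels.append(None)
    return masked, labels


def build_pair(sent_a, sent_b):
    """Pack two sentences into one input for the sentence-pair objective.

    Layout: [CLS] A [SEP] B [SEP], with segment id 0 for the first sentence
    (including [CLS] and the first [SEP]) and 1 for the second.
    """
    tokens = ["[CLS]"] + sent_a + ["[SEP]"] + sent_b + ["[SEP]"]
    segment_ids = [0] * (len(sent_a) + 2) + [1] * (len(sent_b) + 1)
    return tokens, segment_ids


# Assumption: naive whitespace tokenization, for illustration only.
sent_a = "the man went to the store".split()
sent_b = "he bought a gallon of milk".split()

masked_a, labels_a = mask_tokens(sent_a, mask_prob=0.3, seed=1)
pair_tokens, segment_ids = build_pair(sent_a, sent_b)

print(masked_a)
print(labels_a)
print(pair_tokens)
print(segment_ids)
```

Masking is applied to the sentence before packing here so that the special tokens `[CLS]` and `[SEP]` are never masked. For the sentence-pair objective, a training example would carry an extra label saying whether `sent_b` really follows `sent_a` in the source text.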

Developed by Google, BERT learns bidirectional representations of text that significantly improve contextual understanding across many different NLP tasks. Instead of reading sentences in just one direction, it reads them both ways, making sense of context more accurately. This approach has been highly influential in NLP and deep learning, opening the door to more intelligent search engines and chatbots.

